Understand the Deep Web from a technical perspective: architecture, protocols, segmentation, risks, compliance, and best practices for secure corporate environments.

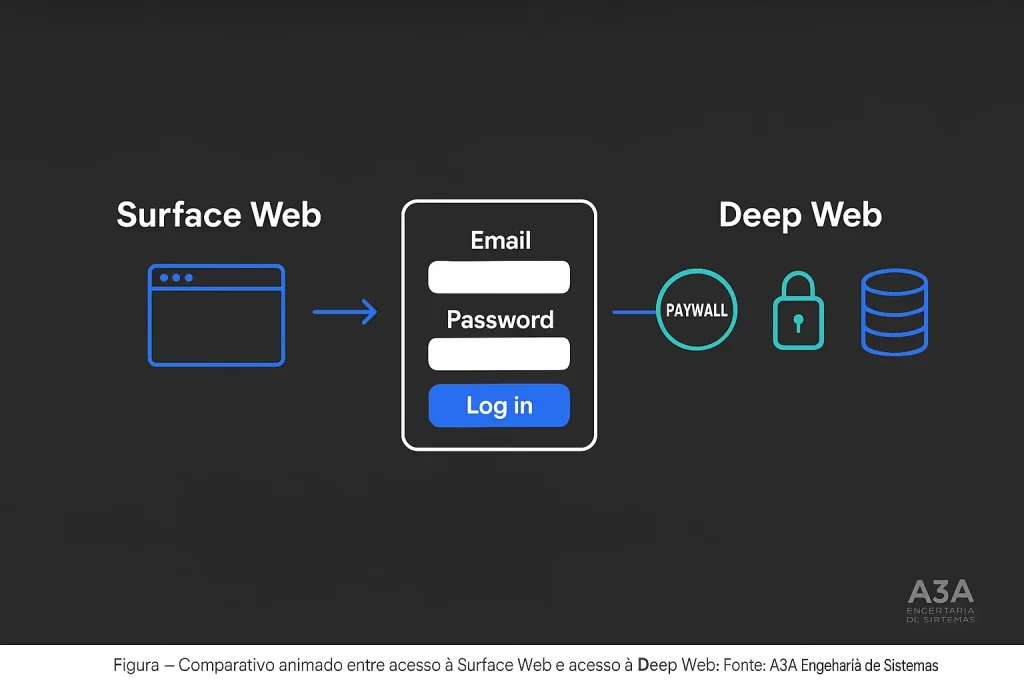

The Deep Web is a fundamental, strategic layer of network and web-system architecture. It comprises all content, data, and systems that are not indexed by traditional search engines such as Google: everything a search cannot surface, even when it can still be reached in a browser by someone with the right link or credentials.

The Deep Web covers everything from information accessible only through authentication, paywalled content, and isolated pages without public links, to dynamic data generated by applications and institutional portals. Its operation involves specific requirements related to protocols, credentials, and advanced security policies.

For professionals in engineering, networking, telecommunications, and information security, understanding the Deep Web is essential to ensuring privacy, anonymity, and the protection of strategic data. The challenges range from logical segmentation of environments to the adoption of sound security practices, which requires command of the relevant standards, continuous updating, and effective risk-mitigation policies.

In this article, we discuss the technical foundations of the Deep Web, its architecture, access protocols, web segmentation, integration with network infrastructures, security implications, associated threats, and recommendations for protecting systems and data. The goal is to provide a comprehensive perspective aligned with engineering best practices and regulatory standards, aimed at professionals seeking solid references for decision-making, project integration, and risk mitigation.

Let’s dive in.

What Is the Deep Web? Technical Foundations and Applications

The Deep Web is the entire portion of the internet that is not indexed by search engines such as Google or Bing. It includes internal databases, corporate systems, academic portals, and pages that require authentication or restricted access. In other words, it is made up of protected content that is not openly accessible to the general public.

Institutional technical illustration highlighting the concept of the Deep Web, its layers, corporate examples, and digital protection elements.

Source: A3A Engenharia de Sistemas.

The Deep Web is composed of all digital resources, information, and services that are not indexed by conventional search engines.

This includes dynamic content generated by applications, protected databases, institutional portals accessible only through authentication, paywalled services, standalone pages without external links, non-HTML content, and files organised under tiered access levels that are available only with proper credentials.

This infrastructure has grown and diversified as internet architecture has matured, and it retains areas that are hidden from public indexers either intentionally (for example, behind a login) or accidentally (for example, pages that nothing links to).

- Dynamic Content: Generated in real time through database queries or application interfaces.

- Confidential Documents and Data: Stored in corporate, academic, or government repositories and requiring strong authentication.

- Isolated Pages: Resources without public hyperlinks, making automated indexing difficult.

- Paywalled Content: Access conditioned on payment or subscription, deliberately restricting indexing.
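To illustrate why isolated pages stay out of search indexes, the sketch below models a crawler as a breadth-first traversal of a hypothetical link graph. A crawler that only follows hyperlinks from its seed URLs can never discover a page with no inbound links. The page paths are invented for illustration, and real crawlers also use sitemaps and other discovery sources, so treat this as a simplified model.

```python
from collections import deque

# Hypothetical site: each page maps to the pages it links to.
LINK_GRAPH: dict[str, list[str]] = {
    "/": ["/about", "/blog"],
    "/about": ["/"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/"],
    # No public page links here, so a link-following crawler never finds it.
    "/reports/internal-2024": ["/"],
}


def crawl(seeds: list[str], graph: dict[str, list[str]]) -> set[str]:
    """Return every page reachable from the seeds by following links (BFS)."""
    seen: set[str] = set(seeds)
    queue: deque[str] = deque(seeds)
    while queue:
        page = queue.popleft()
        for target in graph.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


indexed = crawl(["/"], LINK_GRAPH)
hidden = set(LINK_GRAPH) - indexed
print(sorted(indexed))  # ['/', '/about', '/blog', '/blog/post-1']
print(sorted(hidden))   # ['/reports/internal-2024']
```

The isolated page still exists and can be opened by anyone who knows its address; it is simply invisible to link-based discovery.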

Contrary to common misconceptions, the Deep Web is not synonymous with criminal or illicit infrastructure. That association applies more closely to the so-called Dark Web, a specific subset of the Deep Web that is accessed through anonymising protocols and is commonly associated with clandestine activity.

“The Deep Web is not a dark territory, but rather the universe of strategic data and internal systems that sustain companies, universities, and public institutions. True digital security begins with rigorous management of these non-indexed environments, ensuring privacy, compliance, and operational resilience.”

Altair Galvao, Electrical Engineer, Head of A3A Engenharia de Sistemas

How Does Deep Web Architecture Work and What Are Its Layers?

| Characteristic | Surface Web | Deep Web | Dark Web |
|---|---|---|---|
| Visibility | Indexed by search engines (Google, Bing, etc.) | Not indexed by search engines | Not indexed; restricted and anonymised access |
| Access | Public and unrestricted through traditional browsers | Restricted: login, authentication, paywall, specific configurations | Only through dedicated software (e.g. Tor, I2P) |
| Content | Institutional websites, public portals, blogs, news | Internal databases, intranets, corporate platforms | Anonymous marketplaces, forums, whistleblowing channels |
| Level of Anonymity | No | Variable, according to access controls | High; architecture designed for anonymity and secrecy |
| Legality | Fully legal | Legal, except in cases of illicit use | Depends on use and accessed content |
| Examples | www.a3aengenharia.com.br, official public websites | A3A Engenharia intranet, banking systems, academic environments | .onion addresses, anonymous communication services |

Source: A3A Engenharia de Sistemas.

Segmentation Model

- Surface Web: Pages indexed by search engines such as Google, served over standard HTTP/HTTPS and openly accessible.

- Deep Web: A layer of non-indexed resources, ranging from internal corporate systems to academic databases. Access normally depends on authentication and authorisation and, in corporate settings, on secured transport such as VPNs.

- Dark Web: An even more restricted subset, accessed through specialised software such as Tor Browser, which uses its own addressing (.onion) and layered encryption to provide strong anonymity.

The segmentation model defines levels of visibility, accessibility, and privacy according to the logical and physical architecture of information systems. The technical distinction rests on a functional contrast: the Surface Web is characterised by exposure and indexability, whereas the Deep Web is characterised by restricted exposure and the absence of indexing.
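The Dark Web's separate addressing is easiest to see at the proxy layer. Tor clients typically expose a local SOCKS5 proxy, and a SOCKS5 CONNECT request (RFC 1928) can carry a domain name instead of an IP address, so the proxy, rather than local DNS, resolves a .onion name. The sketch below only builds the request bytes and opens no connection; the .onion host is a made-up placeholder, not a real service.

```python
import struct

SOCKS_VERSION = 0x05
CMD_CONNECT = 0x01
ATYP_DOMAIN = 0x03
METHOD_NO_AUTH = 0x00


def greeting() -> bytes:
    """Client greeting: version 5, one method offered (no authentication)."""
    return bytes([SOCKS_VERSION, 1, METHOD_NO_AUTH])


def connect_request(host: str, port: int) -> bytes:
    """Build a SOCKS5 CONNECT request that hands the host name to the proxy.

    The domain-name address type (0x03) defers name resolution to the proxy,
    which is what allows an overlay network to resolve .onion names.
    """
    name = host.encode("ascii")  # non-ASCII names would need punycode first
    if not 1 <= len(name) <= 255:
        raise ValueError("SOCKS5 domain names must be 1-255 bytes")
    # VER, CMD, RSV, ATYP, name length, name, port (network byte order)
    header = bytes([SOCKS_VERSION, CMD_CONNECT, 0x00, ATYP_DOMAIN, len(name)])
    return header + name + struct.pack("!H", port)


# Placeholder address for illustration only.
req = connect_request("exampleonionaddress.onion", 80)
print(greeting().hex())  # 050100
print(req[:5].hex())     # 0501000319
```

A real client would send `greeting()`, read the proxy's method choice, then send the CONNECT request and parse the reply; those steps are omitted to keep the focus on addressing.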

Access Structures

- Authenticated Request: Requires credentials validated by authentication servers.

- Dynamic Content Generation: Data displayed through queries that depend on time-based parameters, filters, and access policies.

- Logical Isolation: Pages, files, and services without explicit links in the open hyperlink structure.
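The three access structures above can be combined in a small sketch: a request is served only after its token is validated, and the response is generated on the fly by a database query filtered by an access policy, so there is no static page for a crawler to fetch. The token store, table, and department names are hypothetical, and a real system would delegate validation to an authentication server rather than an in-memory dictionary.

```python
import hmac
import sqlite3
from typing import Optional, Tuple

# Hypothetical token store standing in for a real authentication server.
TOKENS = {
    "token-alice": ("alice", "engineering"),
    "token-bob": ("bob", "finance"),
}


def open_db() -> sqlite3.Connection:
    """Create an in-memory database with a few hypothetical documents."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE docs (title TEXT, department TEXT)")
    db.executemany(
        "INSERT INTO docs VALUES (?, ?)",
        [
            ("Network diagram", "engineering"),
            ("Budget 2025", "finance"),
            ("Cabling survey", "engineering"),
        ],
    )
    return db


def authenticate(token: str) -> Optional[Tuple[str, str]]:
    """Return (user, department) for a known token, else None."""
    for known, identity in TOKENS.items():
        # Constant-time comparison avoids leaking token contents via timing.
        if hmac.compare_digest(known.encode(), token.encode()):
            return identity
    return None


def list_documents(db: sqlite3.Connection, token: str) -> list:
    """Authenticated request whose response is generated by a filtered query."""
    identity = authenticate(token)
    if identity is None:
        raise PermissionError("authentication required")
    _, department = identity
    # The access policy (same department only) is enforced inside the query.
    rows = db.execute(
        "SELECT title FROM docs WHERE department = ? ORDER BY title",
        (department,),
    )
    return [title for (title,) in rows]


db = open_db()
print(list_documents(db, "token-alice"))  # ['Cabling survey', 'Network diagram']
```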

Deep Web Protocols and Infrastructure: How to Access Securely?

Source: A3A Engenharia de Sistemas.

Deep Web operations involve application-layer protocols, secure transport, segmented routing, and integration with robust authentication and authorisation systems. Structures such as VLANs, corporate VPNs (virtual private networks), and access-control systems based on multi-factor authentication (MFA) carry much of the traffic to and from these environments.

- Protocols: HTTP/HTTPS, TLS for encrypted transport, and SOCKS5, used especially by anonymising networks whose clients expose local proxy ports to reach hidden services.

- Routing: Integration of private and public backbones using advanced logical and physical segmentation mechanisms, structured cabling compliant with technical standards, and strict management of external network interfaces through secure demarcation points.
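For the encrypted-transport piece, a client can refuse legacy protocol versions and unverified certificates. The sketch below uses Python's standard `ssl` module and assumes TLS 1.2 as the minimum acceptable version, which is a policy choice rather than a requirement stated in this article; the optional CA bundle path and the host in the usage comment are hypothetical.

```python
import socket
import ssl
from typing import Optional


def make_client_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Client TLS context with certificate and host-name verification, TLS 1.2+.

    ca_file can point to an internal corporate CA bundle (hypothetical);
    when omitted, the system's default trust store is used.
    """
    # create_default_context enables CERT_REQUIRED and check_hostname.
    ctx = ssl.create_default_context(cafile=ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def open_tls(host: str, port: int = 443) -> ssl.SSLSocket:
    """Open a verified TLS connection; raises ssl.SSLError on a bad certificate."""
    ctx = make_client_context()
    raw = socket.create_connection((host, port), timeout=10)
    try:
        return ctx.wrap_socket(raw, server_hostname=host)
    except Exception:
        raw.close()
        raise


# Usage (hypothetical host):
# with open_tls("intranet.example.com") as conn:
#     print(conn.version())
```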

Typical Topologies

The network architecture hosting Deep Web resources usually comprises external network interfaces, protected entry infrastructure, and segregated access channels. Physical interconnection points follow structured-cabling standards, covering aerial routes, underground cabling, inspection boxes, and mechanical protection against interception or sabotage.

In corporate environments, integration of the Deep Web with public networks is carried out in a segmented way through advanced firewalls, intrusion-prevention systems, persistent access-control lists, and federated authentication, ensuring secure interoperability without undue exposure of sensitive data.
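At its core, segmented integration through firewalls is a set of ordered rules with an implicit default deny. The sketch below evaluates a hypothetical first-match access-control list between network segments using Python's `ipaddress` module. The subnets, ports, and rules are invented, and real firewalls add connection state, protocol matching, and logging.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    action: str           # "allow" or "deny"
    src: str              # source network in CIDR notation
    dst: str              # destination network in CIDR notation
    port: Optional[int]   # None means any port

    def matches(self, src_ip: str, dst_ip: str, port: int) -> bool:
        return (
            ipaddress.ip_address(src_ip) in ipaddress.ip_network(self.src)
            and ipaddress.ip_address(dst_ip) in ipaddress.ip_network(self.dst)
            and (self.port is None or self.port == port)
        )


# Hypothetical segments: 10.10.0.0/24 users, 10.20.0.0/24 intranet servers,
# 10.30.0.0/24 security devices (cameras, access control).
ACL = [
    Rule("allow", "10.10.0.0/24", "10.20.0.0/24", 443),   # users -> intranet (HTTPS)
    Rule("deny", "10.10.0.0/24", "10.30.0.0/24", None),   # users never reach devices
    Rule("allow", "10.30.0.0/24", "10.20.0.5/32", 8443),  # devices -> management server
]


def evaluate(acl: list, src_ip: str, dst_ip: str, port: int) -> str:
    """First matching rule wins; unmatched traffic is denied by default."""
    for rule in acl:
        if rule.matches(src_ip, dst_ip, port):
            return rule.action
    return "deny"


print(evaluate(ACL, "10.10.0.7", "10.20.0.5", 443))  # allow
print(evaluate(ACL, "10.10.0.7", "10.20.0.5", 22))   # deny (no rule matches)
```

Rule order matters: placing a broad "allow" above the device-segment "deny" would silently open that segment, which is why rule sets need periodic review.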

Deep Web data-protection best practices follow internationally recognised recommendations such as ISO/IEC 27001 (Information Security Management), ISO/IEC 27002 (Security Controls), and ISO/IEC 27005 (Risk Management). These standards guide continuous updating policies, the use of advanced encryption, and segmented access controls, all of which are essential for non-indexed environments.

Risks, Threats, and Privacy in the Deep Web: How to Protect Yourself?

Threats and Risk Vectors

- Data Interception: Deep Web traffic that is not properly encrypted can be captured by packet sniffing, exposing sensitive information.

- Spoofing: Attackers impersonate legitimate entities to obtain credentials or data without authorisation.

- Attacks on Authenticated Systems: Attempts to exploit vulnerabilities in authentication and authorisation mechanisms.

- Malware and Remote Exploitation: Environments with poor update controls become targets for malicious actors seeking to escalate a compromise.

⚠️ Attention:

Unauthorised access to or exposure of data within corporate Deep Web systems can result in serious legal risks, information leakage, and operational impact for companies.

Protection Technologies

- Encrypt all communication segments, using TLS with digital certificates issued by trusted certificate authorities and end-to-end encryption where required.

- Deploy next-generation firewalls with deep packet inspection and context-based policies.

- Adopt multi-factor authentication and least-privilege access control.

- Continuously update software, firmware, and applications, combined with active vulnerability management.

- Monitor network traffic in real time to identify and mitigate anomalous activities.
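Real-time monitoring often starts with simple threshold rules. As an illustration, this sketch flags a source address that produces too many failed logins within a sliding time window, a common sign of credential attacks on authenticated systems. The threshold, window length, and event format are assumptions chosen for the example, not recommended values.

```python
from collections import defaultdict, deque


class FailedLoginMonitor:
    """Flag sources with more than `threshold` failures inside `window` seconds."""

    def __init__(self, threshold: int = 5, window: float = 60.0) -> None:
        self.threshold = threshold
        self.window = window
        self._failures = defaultdict(deque)  # source -> timestamps of failures

    def record(self, source: str, timestamp: float, success: bool) -> bool:
        """Record a login attempt; return True if the source should be flagged."""
        if success:
            return False
        events = self._failures[source]
        events.append(timestamp)
        # Drop failures that have fallen out of the sliding window.
        while events and timestamp - events[0] > self.window:
            events.popleft()
        return len(events) > self.threshold


monitor = FailedLoginMonitor(threshold=3, window=60)
burst = [monitor.record("203.0.113.7", t, False) for t in (0, 10, 20, 30)]
print(burst)  # [False, False, False, True]
```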

Anonymity, Privacy, and Ethical Considerations

Non-indexed environments strengthen data privacy, and the anonymising networks of the Dark Web go further by offering hard-to-trace access; both create ethical and technical challenges. The use of anonymity technologies must comply with applicable legislation and responsible-use standards, avoiding loopholes for abuse or improper exposure.

The processing, storage, and access of sensitive data in the Deep Web must comply with the LGPD in Brazil, the GDPR in the European Union, and the guidelines of the ANPD (Brazilian Data Protection Authority), ensuring digital governance aligned with international best practices.

Compliance and Technical Standards in the Deep Web

- Structured Cabling: Physical infrastructure (pathways, spaces, dedicated entrance facilities, and separation between systems) built to technical standards to ensure the isolation, integrity, and protection of non-indexed data flows.

- Systems Security: Access-control systems, physical and logical integrity of components, fault protection, and tamper detection are mandatory in critical environments.

- Updates and Hardening: Continuous update policies, hardening of servers and critical segments, up-to-date antivirus, and blocking of untrusted integrations.

- Access Control: Implementation of least privilege, logical segregation of environments, and constant auditing of permissions granted to users and third-party systems.

- Monitoring and Backup: Automatic backup solutions and periodic data copying in high-security environments are mandatory for critical, high-availability operations.

Compliance with these guidelines not only ensures regulatory conformity but also raises the level of resilience against sophisticated attack and compromise scenarios.
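Permission auditing can be partly automated by comparing what each account has been granted with what its role actually needs. The sketch below assumes a hypothetical role-to-permission baseline and invented account names; a real audit would pull grants from the directory service or IAM system.

```python
# Hypothetical least-privilege baseline: permissions each role genuinely needs.
ROLE_BASELINE: dict[str, set[str]] = {
    "analyst": {"reports:read"},
    "dba": {"db:read", "db:write", "db:backup"},
    "integration": {"api:read"},
}


def audit(grants: dict[str, tuple[str, set[str]]]) -> dict[str, set[str]]:
    """Return, per account, the permissions granted beyond its role baseline.

    Accounts with an unknown role get an empty baseline, so every
    permission they hold is reported for review.
    """
    findings: dict[str, set[str]] = {}
    for account, (role, granted) in grants.items():
        excess = granted - ROLE_BASELINE.get(role, set())
        if excess:
            findings[account] = excess
    return findings


grants = {
    "maria": ("analyst", {"reports:read"}),
    "svc-erp": ("integration", {"api:read", "db:write"}),  # third-party system
    "joao": ("dba", {"db:read", "db:write", "db:backup"}),
}
print(audit(grants))  # {'svc-erp': {'db:write'}}
```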

Deep Web and Electronic Security: Integration and Best Practices

The interface between Deep Web environments and electronic security systems is unavoidable in advanced corporate and building infrastructures. Access-control integrations, video monitoring, and intrusion-detection systems, when connected through protected Deep Web segments, require reinforced physical and logical integrity controls.

- Traffic Isolation: Ensuring that traffic between security devices and central systems flows through authenticated, encrypted, and monitored channels, mitigating risks of interception or manipulation.

- Hardening Policy: Continuous hardening of all connected devices, including firmware and critical application updates, and integration only with approved, trusted management software.

- Monitoring and Resilience: Incident-detection and response systems integrated with Deep Web segments ensure rapid identification of threats and data recovery in the event of failure or hostile action.
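One way to make manipulation of device traffic detectable is for each security device to sign its events with a shared key, and for the central system to verify the signature and reject stale messages. This sketch uses HMAC-SHA256 from Python's standard library; the key, event fields, and 30-second freshness limit are hypothetical. HMAC provides integrity and authenticity but not confidentiality, so it complements encrypted channels rather than replacing them.

```python
import hashlib
import hmac
import json
import time
from typing import Optional

DEVICE_KEY = b"hypothetical-shared-key-per-device"  # provision securely in practice
MAX_AGE_SECONDS = 30


def sign_event(event: dict, key: bytes = DEVICE_KEY) -> dict:
    """Serialise the event canonically and attach an HMAC-SHA256 tag."""
    body = json.dumps(event, sort_keys=True, separators=(",", ":"))
    tag = hmac.new(key, body.encode(), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify_event(message: dict, key: bytes = DEVICE_KEY,
                 now: Optional[float] = None) -> dict:
    """Check the tag, then reject events older than MAX_AGE_SECONDS.

    The age check narrows, but does not eliminate, the window for replays.
    """
    expected = hmac.new(key, message["body"].encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, message["tag"]):
        raise ValueError("signature mismatch: event altered or wrong key")
    event = json.loads(message["body"])
    current = time.time() if now is None else now
    if current - event["ts"] > MAX_AGE_SECONDS:
        raise ValueError("stale event: possible replay")
    return event


msg = sign_event({"device": "cam-07", "type": "door_forced", "ts": 1000.0})
print(verify_event(msg, now=1010.0)["type"])  # door_forced
```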

💡 Technical Tip:

Implement multi-factor authentication policies and regularly monitor access logs to strengthen security in non-indexed environments.
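Many MFA deployments use time-based one-time passwords (TOTP, RFC 6238) as the second factor. The sketch below computes TOTP codes with the standard library, using the RFC defaults of a 30-second step and HMAC-SHA1. The secret is the RFC's published test key, used only so the output can be checked against the RFC test vectors; production systems should rely on a vetted library and securely provisioned per-user secrets.

```python
import hashlib
import hmac
import struct


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) with dynamic truncation."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, unix_time: float, step: int = 30, digits: int = 6) -> str:
    """Time-based OTP (RFC 6238): HOTP over the number of elapsed time steps."""
    return hotp(secret, int(unix_time // step), digits)


def verify_totp(secret: bytes, submitted: str, unix_time: float,
                drift_steps: int = 1) -> bool:
    """Accept 6-digit codes from adjacent 30 s steps to tolerate clock drift."""
    for delta in range(-drift_steps, drift_steps + 1):
        candidate = totp(secret, unix_time + delta * 30)
        # Constant-time comparison of the submitted code.
        if hmac.compare_digest(candidate, submitted):
            return True
    return False


SECRET = b"12345678901234567890"  # RFC test key, never use in production
print(totp(SECRET, 59, digits=8))  # 94287082
```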

Alignment with international physical and logical security standards is fundamental to maintaining the integrity, confidentiality, and availability of interconnected systems within this ecosystem.

The creation and maintenance of non-indexed corporate systems such as internal databases, intranets, and restricted platforms require adherence to infrastructure standards such as TIA/EIA-568 and ABNT NBR 14565, which set requirements for structured cabling, system separation, and physical network protection.

Frequently Asked Questions

Is accessing the Deep Web illegal?

No. Accessing the Deep Web is not illegal in itself; legality depends on how it is used. Unauthorised access to private information is a crime.

How can I protect myself in the Deep Web?

By using encryption, strong authentication, and continuous system updating.

What are the main risks of the Deep Web?

Data leakage, attacks on authenticated systems, and traffic interception.

What is the Deep Web?

The Deep Web gathers all internet content that is not indexed by traditional search engines, such as databases, internal company systems, academic portals, and restricted website areas that require authentication. It is not something ‘secret’ by nature, but rather pages not openly accessible to the general public.

Can I access the Deep Web from a mobile phone?

Yes, it is possible to access Deep Web content from a mobile phone, especially banking applications, email, corporate platforms, and systems that require login. However, access to the Dark Web, which is a subset of the Deep Web, requires specific apps and configurations, such as Tor-compatible browsers.

Where is the Dark Web located?

The Dark Web is a subset of the Deep Web that is accessible only through specialised protocols and software such as Tor Browser. It is not located in any single physical place but distributed across servers around the world, outside the reach of conventional search engines.

Is there a Google for the Deep Web?

There is no Google for the Deep Web because its content is not indexed by traditional search engines. However, specialised search tools exist for some restricted areas, such as academic databases or internal portals. On the Dark Web, DuckDuckGo offers an onion address for private searching over Tor, but it does not index .onion sites; dedicated engines such as Ahmia index only a limited portion of onion services.

Conclusion

Understanding and properly managing the Deep Web requires deep technical knowledge of architecture, access protocols, network infrastructure, logical segregation, and physical and digital protection mechanisms. Non-indexed environments are essential for maintaining privacy, confidentiality, and control over strategic data, but they require rigorous information-security policies, regulatory alignment, and continuous hardening practices. The integration of critical systems with these environments demands standardised access controls, reinforced monitoring, and readiness for incident response, covering both cyber threats and operational risks.

In engineering and security projects, adopting solid models for segmentation, authentication, and encryption is decisive for effective protection in Deep Web environments. Given the pace of technological change, continuous updating of methodologies and tools is recommended, along with close collaboration between network engineering, IT, and cybersecurity.

Final Considerations

Exploring the technical universe of the Deep Web reveals its strategic importance for private, corporate, and institutional environments, highlighting the need for advanced engineering and robust security practices. Continuous improvement of controls, aligned with recognised standards and frameworks, becomes a competitive differentiator for professionals and companies in the field.

Thank you for reading this technical article. We invite everyone interested to follow A3A Engenharia de Sistemas on social media for more specialised content and authoritative updates on Systems Engineering, Networking, Security, and IT.

Normative References

ISO/IEC 27001 – Information Security Management

ISO/IEC 27002 – Security Controls

ISO/IEC 27005 – Risk Management

TIA/EIA-568 – Structured Cabling

ABNT NBR 14565 – Telecommunications Cabling Infrastructure

LGPD – Brazilian General Data Protection Law

GDPR – General Data Protection Regulation (EU)

ABNT NBR ISO/IEC 27001 – Information Security Management

ABNT NBR ISO/IEC 27005 – Risk Management

CERT.br – Brazilian Computer Security Incident Response Center

NIST Cybersecurity Framework – National Institute of Standards and Technology

Relevant Links (Complementary Technical Materials)

What Is the NIST Cybersecurity Framework?

Corporate Compliance: Technical Definition, Benefits, and Implementation Strategies