Understand what video metadata are, how they are generated, where they are used, and how they support intelligent video monitoring systems.

Check it out!

In the context of Video Monitoring, metadata are textual descriptions that detail the visual content of a video.

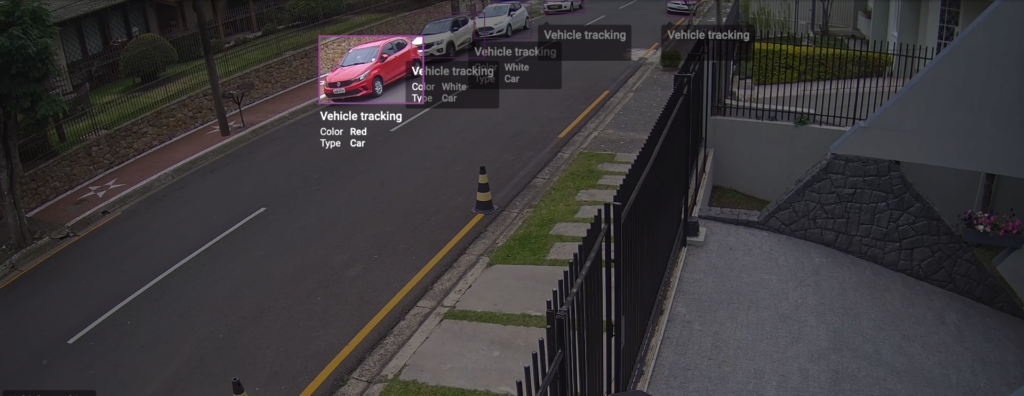

Metadata can provide a wide variety of useful information. They can offer a detailed description of a situation, identify important objects that are visible, and provide information about specific characteristics associated with a scene.

Metadata give context to events, allowing large volumes of recordings to be organized and quickly searched. They can include details such as vehicle and clothing colors, object locations in the scene, and direction of movement.

That is why understanding how to work with metadata has become increasingly important to ensure security, protection, and efficiency in business operations.

In this article, we will discuss metadata in the context of monitoring and operational efficiency, detailing how metadata work and how they are used to bring intelligence to monitoring systems.

Let’s take a look.


What Is Video Metadata?

Metadata are sets of structured information that describe, locate, or facilitate the retrieval, use, or management of an information resource.

They are commonly classified into three types:

- Administrative metadata provide information that helps manage a resource, such as when and how it was created, the file type, and who can access it;

- Structural metadata detail how the components of a resource are organized;

- Descriptive metadata provide information that helps discover and identify the resource itself.

In a CCTV System, metadata provide a textual description of the video content, identifying objects of interest or supplying a detailed description of the scene.
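As an illustration, here is a minimal Python sketch of how the metadata for a single recording might be grouped into the three categories described above. All class and field names are hypothetical and are not taken from any standard or VMS product.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class AdministrativeMetadata:
    # How and when the recording was created, and who may access it
    created_at: datetime
    file_type: str
    allowed_roles: list[str]


@dataclass
class StructuralMetadata:
    # How the recording's components are organized
    segment_ids: list[str]
    frames_per_segment: int


@dataclass
class DescriptiveMetadata:
    # What is visible in the video; this is what searches rely on
    objects: list[dict] = field(default_factory=list)


@dataclass
class RecordingMetadata:
    administrative: AdministrativeMetadata
    structural: StructuralMetadata
    descriptive: DescriptiveMetadata


record = RecordingMetadata(
    administrative=AdministrativeMetadata(
        created_at=datetime(2024, 5, 1, 14, 0),
        file_type="video/mp4",
        allowed_roles=["operator", "supervisor"],
    ),
    structural=StructuralMetadata(segment_ids=["seg-001", "seg-002"], frames_per_segment=1800),
    descriptive=DescriptiveMetadata(
        objects=[{"class": "person", "clothing_color": "red"}]
    ),
)
print(record.descriptive.objects[0]["clothing_color"])  # red
```

In a CCTV context, the descriptive part is the one produced by video analytics, while the administrative and structural parts usually come from the recorder or VMS.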

How Is Video Metadata Generated?

Metadata are generated in real time through Video Analytics. Analytics are algorithms that can run directly on the camera or on a dedicated server.

Until recently, video analysis was performed exclusively on servers, since it required a level of processing that edge devices could not support.

With the increase in camera processing capacity over recent years, it has become possible to perform advanced analysis directly at the edge.

Edge analytics tools work on uncompressed video with extremely low latency. This enables fast, real-time responses while avoiding the additional cost and complexity of transferring all video content elsewhere in the system for processing.

However, it is important to note that cameras equipped with enough processing power to perform edge analytics usually come at a higher cost. This introduces an important consideration when designing a Video Monitoring System.

The designer must decide whether to invest in cameras with greater processing capacity or allocate resources to a dedicated server to process video analytics.

This decision should be based on a careful return-on-investment analysis, taking into account factors such as the desired system performance, the available budget, and the specific needs of the project.

The ideal choice may vary depending on the specific circumstances of each project.
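At its core, part of that comparison is simple arithmetic. The sketch below compares only upfront hardware cost; the prices, the number of streams a server can analyze, and the function names are all hypothetical, and a real return-on-investment analysis would also account for bandwidth, power, licensing, and maintenance.

```python
import math


def edge_option_cost(num_cameras: int, edge_camera_price: float) -> float:
    # Every camera runs its own analytics, so no analytics server is needed
    return num_cameras * edge_camera_price


def server_option_cost(num_cameras: int, basic_camera_price: float,
                       streams_per_server: int, server_price: float) -> float:
    # Cheaper cameras, plus enough servers to analyze every stream
    servers_needed = math.ceil(num_cameras / streams_per_server)
    return num_cameras * basic_camera_price + servers_needed * server_price


# Hypothetical figures for a 40-camera site
print(edge_option_cost(40, 900))              # 36000
print(server_option_cost(40, 500, 16, 6000))  # 38000
```

With these particular numbers the edge option is cheaper, but a server that can analyze all 40 streams would bring the server option down to 26000, reversing the result. This sensitivity to the inputs is why the decision has to be made project by project.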

Where Is Metadata Used?

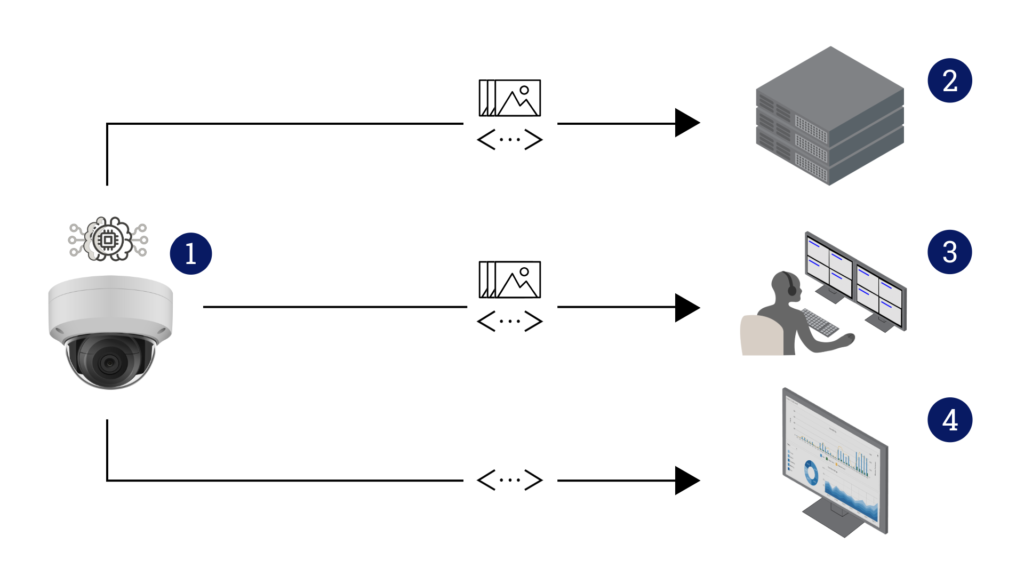

The main “metadata consumers” can be categorized as follows:

- Edge Applications;

- Hybrid Processing Applications;

- Video Management Systems (VMS);

- Dashboards.

Edge Applications

Edge Applications refer to the use of analytics tools that run directly on the camera. These tools can apply filters and logical rules to process information related to objects detected in the scene.

These edge analytics tools can trigger specific actions based on predefined events or identified behaviors. For example, they can control a PTZ (Pan-Tilt-Zoom) camera to follow the movement of a person detected in the scene.
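A minimal sketch of such an edge rule follows: a filter on detections, a logical rule (a zone of interest), and an action. The `Detection` fields, the normalized zone coordinates, and the `ptz_follow` callback are hypothetical; an actual camera exposes its own detection format and PTZ control API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Detection:
    object_id: int
    label: str          # object class, e.g. "person" or "vehicle"
    confidence: float   # detector confidence, 0.0 to 1.0
    x: float            # normalized horizontal center, 0.0 (left) to 1.0 (right)
    y: float            # normalized vertical center, 0.0 (top) to 1.0 (bottom)


def apply_rules(detections: list[Detection],
                zone: tuple[float, float, float, float],
                min_confidence: float,
                action: Callable[[Detection], None]) -> list[int]:
    """Run `action` for every confident person detection inside `zone`.

    Returns the IDs of the objects that triggered the action.
    """
    x_min, y_min, x_max, y_max = zone
    triggered = []
    for det in detections:
        # Filter: skip low-confidence detections and anything that is not a person
        if det.confidence < min_confidence or det.label != "person":
            continue
        # Logical rule: act only on people inside the zone of interest
        if x_min <= det.x <= x_max and y_min <= det.y <= y_max:
            action(det)
            triggered.append(det.object_id)
    return triggered


def ptz_follow(det: Detection) -> None:
    # Placeholder: a real camera would send a PTZ command through its own API
    print(f"PTZ: follow object {det.object_id} at ({det.x:.2f}, {det.y:.2f})")


frame = [
    Detection(1, "person", 0.92, 0.40, 0.55),
    Detection(2, "vehicle", 0.88, 0.45, 0.60),
    Detection(3, "person", 0.35, 0.50, 0.50),
]
print(apply_rules(frame, (0.3, 0.3, 0.7, 0.9), 0.6, ptz_follow))  # [1]
```

Only object 1 triggers the action: object 2 is not a person, and object 3 falls below the confidence threshold.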

The evolution of edge computing has enabled the use of advanced tools such as Artificial Intelligence (AI), Machine Learning, and Deep Learning inside the cameras themselves. This represents a significant milestone in how we process and interpret data.

In addition, cameras with edge processing capabilities can record video directly to an SD card inside the camera. This increases system reliability, because recording can continue if the network connection fails, and makes high-performance deployments practical in remote locations.

Hybrid Processing Applications

Hybrid Processing Applications represent a model in which edge processing (on the camera) and server-side processing are combined to perform more advanced analyses.

In this model, preprocessing is usually performed on the camera itself. This can include tasks such as initial object detection, filtering, and execution of basic analyses.

Additional processing is then performed on the server. This can involve more complex analyses that require more processing power or storage capacity than a camera can provide.

For example, this may include correlating data from multiple cameras, performing long-term analysis, or applying more advanced machine learning algorithms.
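The division of work can be sketched as two functions: a camera-side filter and a server-side correlation step across cameras. The event format is hypothetical, and matching people across cameras only by class and clothing color is a deliberate simplification; practical cross-camera re-identification relies on much richer appearance features.

```python
from collections import defaultdict

# Events as cameras might report them (hypothetical format)
camera_events = [
    {"camera": "entrance", "t": 10.0, "label": "person", "color": "red", "confidence": 0.91},
    {"camera": "entrance", "t": 12.0, "label": "person", "color": "blue", "confidence": 0.40},
    {"camera": "hallway", "t": 25.0, "label": "person", "color": "red", "confidence": 0.87},
    {"camera": "exit", "t": 41.0, "label": "person", "color": "red", "confidence": 0.93},
]


def edge_preprocess(events, min_confidence=0.6):
    # Camera side: drop weak detections before anything leaves the device
    return [e for e in events if e["confidence"] >= min_confidence]


def server_correlate(events):
    # Server side: group events from all cameras by a simple attribute
    # signature and order each group in time to approximate the route taken
    routes = defaultdict(list)
    for e in sorted(events, key=lambda e: e["t"]):
        routes[(e["label"], e["color"])].append(e["camera"])
    return dict(routes)


print(server_correlate(edge_preprocess(camera_events)))
# {('person', 'red'): ['entrance', 'hallway', 'exit']}
```

The camera discards the low-confidence detection before transmission, so the server only has to correlate the three relevant events.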

Video Management Systems (VMS)

A Video Management System (VMS) plays a fundamental role in the context of Intelligent Video Monitoring. It acts as the control center of the system, enabling the reception, processing, and storage of images and videos from IP cameras.

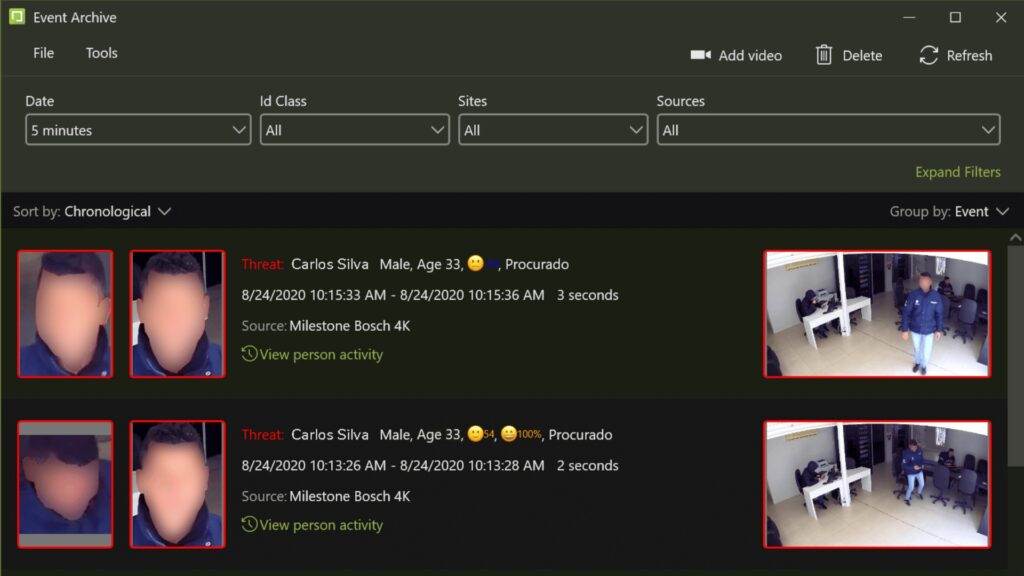

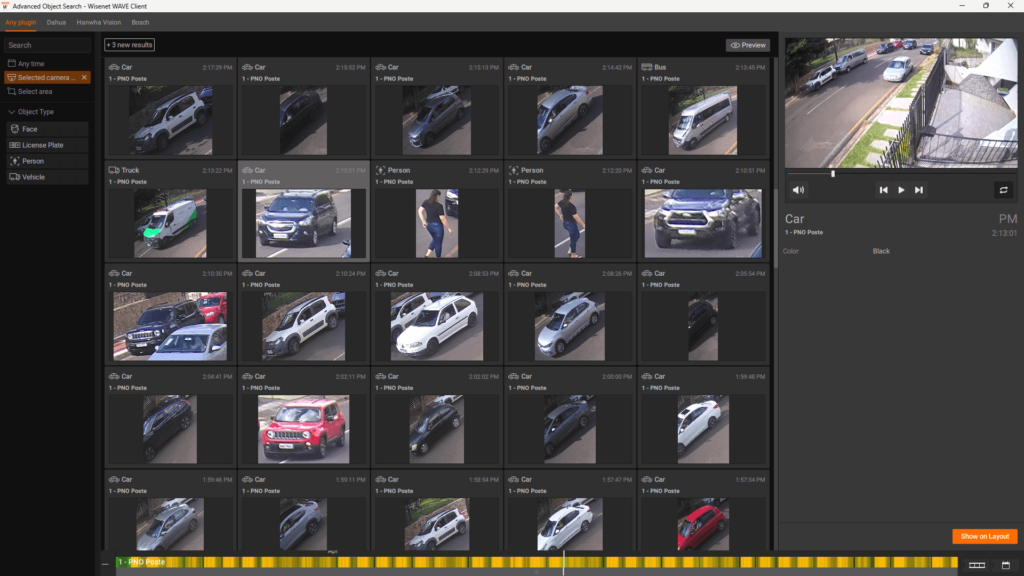

The VMS can integrate advanced features into a single platform, including technologies such as facial recognition, motion detection, and behavior analysis, which expands the capabilities of the monitoring system.

One of the main capabilities of a VMS is advanced search. Its search filters allow operators to quickly locate specific events in stored recordings. Search criteria can be defined using metadata such as date, time, camera, event type, object color, and direction of movement.
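A metadata search of this kind comes down to filtering event records by a time window and attribute values. The record fields and the `search` function below are hypothetical, not the query interface of any particular VMS.

```python
from datetime import datetime

events = [
    {"time": datetime(2024, 5, 1, 8, 15), "camera": "gate", "type": "vehicle",
     "color": "white", "direction": "in"},
    {"time": datetime(2024, 5, 1, 9, 40), "camera": "gate", "type": "person",
     "color": "red", "direction": "out"},
    {"time": datetime(2024, 5, 1, 18, 5), "camera": "lobby", "type": "person",
     "color": "red", "direction": "in"},
]


def search(events, start=None, end=None, **criteria):
    """Return events within [start, end] whose fields match every criterion."""
    results = []
    for event in events:
        if start is not None and event["time"] < start:
            continue
        if end is not None and event["time"] > end:
            continue
        if all(event.get(key) == value for key, value in criteria.items()):
            results.append(event)
    return results


# People in red clothing seen during the morning of May 1
hits = search(events,
              start=datetime(2024, 5, 1, 0, 0),
              end=datetime(2024, 5, 1, 12, 0),
              type="person", color="red")
print([(e["camera"], e["time"].strftime("%H:%M")) for e in hits])  # [('gate', '09:40')]
```

Because every criterion is just a metadata field, adding a new filter (for example `direction="in"`) requires no change to the search logic.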

In addition, a VMS has robust user and permission management features. This allows administrators to create user profiles with different access and control levels, ensuring that only authorized individuals have access to cameras and recordings. This reinforces system security and privacy.
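A deny-by-default permission check of the kind described here might look like the following sketch. The role, camera, and action names are hypothetical; real VMS products define their own permission models.

```python
# Hypothetical access profiles: which cameras each role may use, and how
PROFILES = {
    "operator": {
        "cameras": {"lobby", "gate"},
        "actions": {"view_live"},
    },
    "supervisor": {
        "cameras": {"lobby", "gate", "vault"},
        "actions": {"view_live", "playback", "export"},
    },
}


def is_allowed(role: str, camera: str, action: str) -> bool:
    profile = PROFILES.get(role)
    if profile is None:
        return False  # unknown roles get no access at all
    # Both the camera and the action must be granted to the role
    return camera in profile["cameras"] and action in profile["actions"]


print(is_allowed("operator", "lobby", "view_live"))  # True
print(is_allowed("operator", "vault", "view_live"))  # False
print(is_allowed("supervisor", "vault", "export"))   # True
```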

Finally, a VMS can integrate with other security systems, such as access control and alarm systems. This provides a unified view of events, improving the effectiveness and efficiency of the security system.

Dashboards

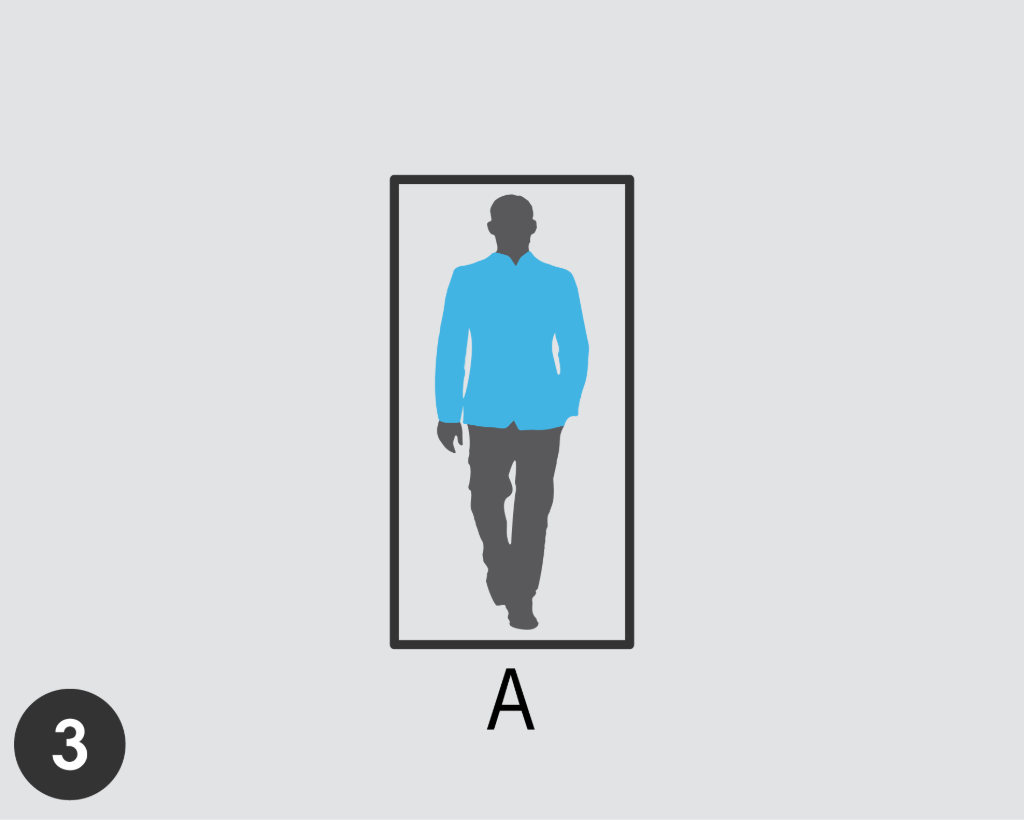

Dashboards are Business Intelligence platforms. They receive and organize metadata to facilitate trend analysis, both historical and real-time.

These dashboards use statistical analysis based on collected data, such as customer flow or the overall customer experience within a facility. These analyses provide valuable insights that can guide data-driven decisions, resulting in more efficient and effective operations.
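For example, a customer-flow chart can be built from line-crossing metadata by counting entries per hour. The event format (an ISO timestamp plus a direction) is a hypothetical simplification of what a people-counting analytic might emit.

```python
from collections import Counter
from datetime import datetime

# Hypothetical line-crossing events: (timestamp, direction)
crossings = [
    ("2024-05-01T09:12:00", "in"),
    ("2024-05-01T09:47:00", "in"),
    ("2024-05-01T10:05:00", "out"),
    ("2024-05-01T10:20:00", "in"),
    ("2024-05-01T10:58:00", "in"),
]


def hourly_entries(events):
    """Count entries ('in' crossings) per hour of the day."""
    counts = Counter()
    for timestamp, direction in events:
        if direction == "in":
            counts[datetime.fromisoformat(timestamp).hour] += 1
    return dict(sorted(counts.items()))


print(hourly_entries(crossings))  # {9: 2, 10: 2}
```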

The metadata received by dashboards can cover a wide range of information, from customer behavior details to system performance metrics. These data are then processed and presented visually and intuitively, allowing users to quickly identify patterns, trends, and anomalies.

In addition, dashboards can be customized to meet the specific needs of each organization. They can be configured to track specific metrics, provide real-time alerts, and even integrate data from multiple sources for a more complete view.
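A real-time alert can be as simple as a threshold on a running metric. This sketch tracks occupancy from entry and exit events and raises an alert only when the count first rises above a limit; the event format and the limit are hypothetical.

```python
def occupancy_alerts(events, limit):
    """Return (timestamp, occupancy) each time occupancy rises above limit."""
    occupancy = 0
    alerts = []
    for timestamp, direction in events:
        previous = occupancy
        if direction == "in":
            occupancy += 1
        else:
            occupancy = max(0, occupancy - 1)  # never report negative occupancy
        # Alert on the transition across the limit, not on every event above it
        if previous <= limit < occupancy:
            alerts.append((timestamp, occupancy))
    return alerts


events = [
    ("10:00", "in"), ("10:02", "in"), ("10:05", "in"),
    ("10:06", "in"), ("10:09", "out"), ("10:15", "out"),
]
print(occupancy_alerts(events, limit=2))  # [('10:05', 3)]
```

Alerting only on the transition keeps the dashboard from repeating the same alert while occupancy stays above the limit.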

How Is Metadata Transmitted?

Metadata generated in CCTV Systems can be delivered in two distinct ways, depending on the context and the needs of the system that will consume this information:

Real-time transmission

In this method, a complete description of the scene is provided for every frame, even when there is no activity and no objects are present.

Metadata are sent continuously for as long as the consuming system requests them. This is essential in situations that require an immediate response and accurate situational awareness.

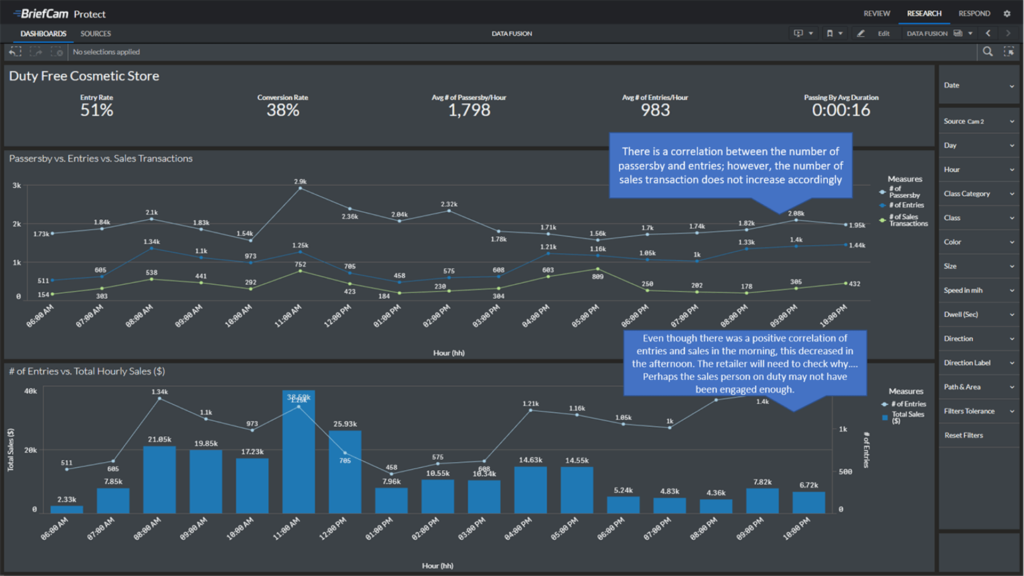

This figure illustrates the metadata flow, where continuous frames from the camera provide real-time information about the scene. The metadata for each frame describe the scene at that specific moment, independently of earlier frames.

In Frame 1, objects A and B are detected: A is classified as a person wearing red clothing and B as a person wearing blue clothing.

In Frame 2, the camera updates the classification: object A is now classified as wearing blue clothing and object B as wearing yellow clothing. Although the objects are the same as in Frame 1, their color attributes have changed, and this is reflected in the metadata.

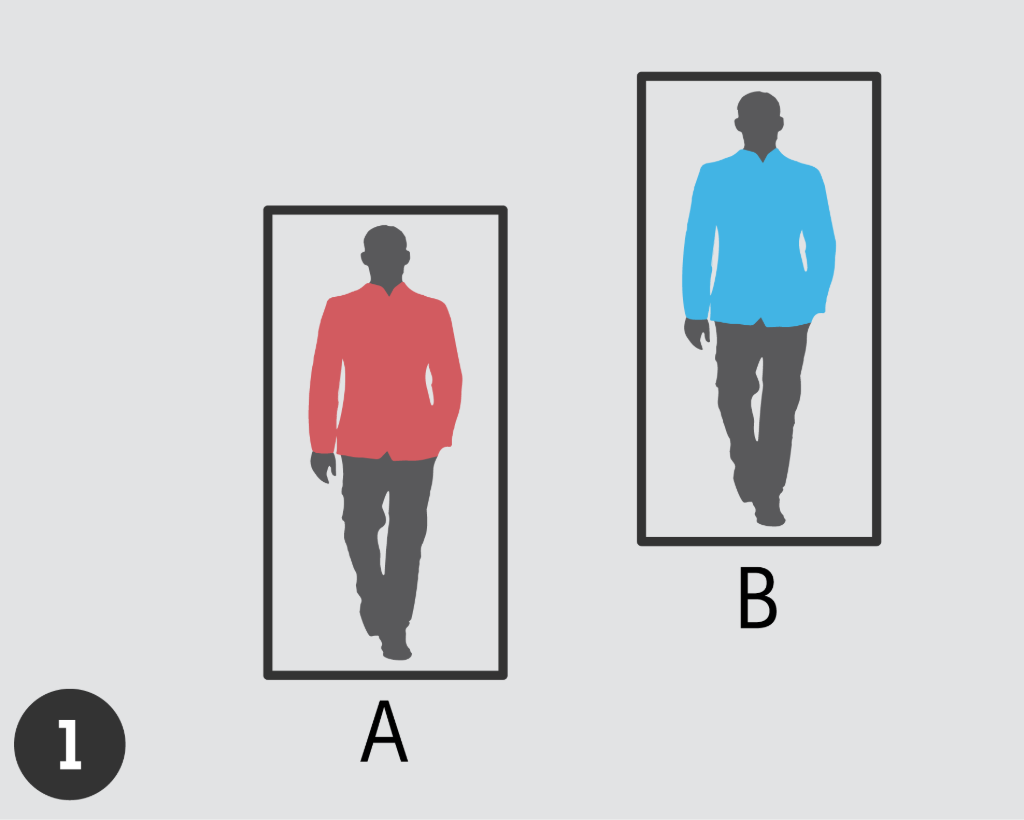

In Frame 3, object B is no longer in the scene; the camera tracks only object A, still classified as a person wearing blue clothing.
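The frame-by-frame description of objects A and B could be represented as a stream of messages like the ones below. The JSON field names are hypothetical (real cameras use their own schemas), and Frame 4, an empty scene, is added purely for illustration to show that real-time delivery still sends a message when nothing is present.

```python
import json

# Frames 1-3 follow the example in the text; frame 4 (empty scene) is hypothetical
scene = {
    1: [{"id": "A", "class": "person", "clothing": "red"},
        {"id": "B", "class": "person", "clothing": "blue"}],
    2: [{"id": "A", "class": "person", "clothing": "blue"},
        {"id": "B", "class": "person", "clothing": "yellow"}],
    3: [{"id": "A", "class": "person", "clothing": "blue"}],
    4: [],
}


def realtime_messages(scene):
    """Yield one JSON message per frame, in frame order."""
    for frame_number in sorted(scene):
        # Each message describes only what is visible in that frame and is
        # sent even when no objects are present
        yield json.dumps({"frame": frame_number, "objects": scene[frame_number]})


for message in realtime_messages(scene):
    print(message)
```

Note that object A appears in three separate messages with different attributes; nothing in this format links those observations together except the shared ID.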

Optimized delivery

In this approach, metadata related to each specific object in the scene are combined into a single entity, a process that can be referred to as aggregation.

This means that instead of describing an object separately in every frame in which it appears, all observations of the same object are combined into a single entity. This significantly reduces the total amount of data that needs to be stored and processed, resulting in more efficient use of system resources.

In addition, with optimized delivery, metadata are provided only when there are objects present in the scene. This avoids transmission of unnecessary data and ensures that only the most relevant information is delivered to the end user.

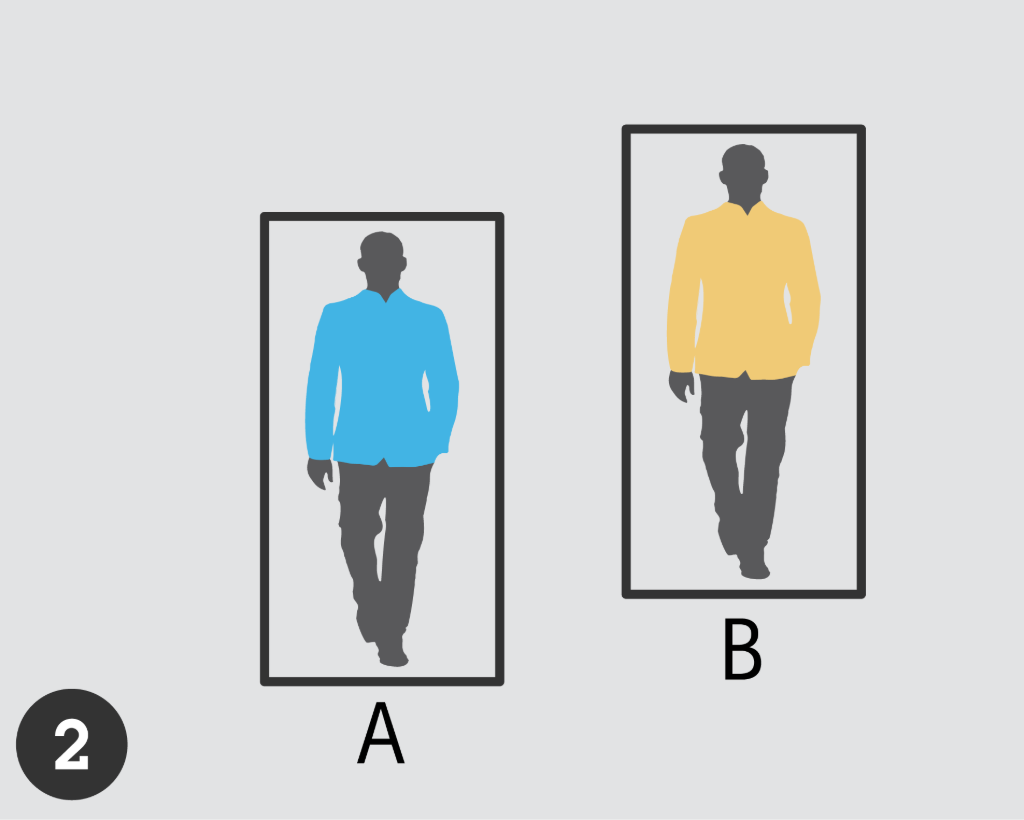

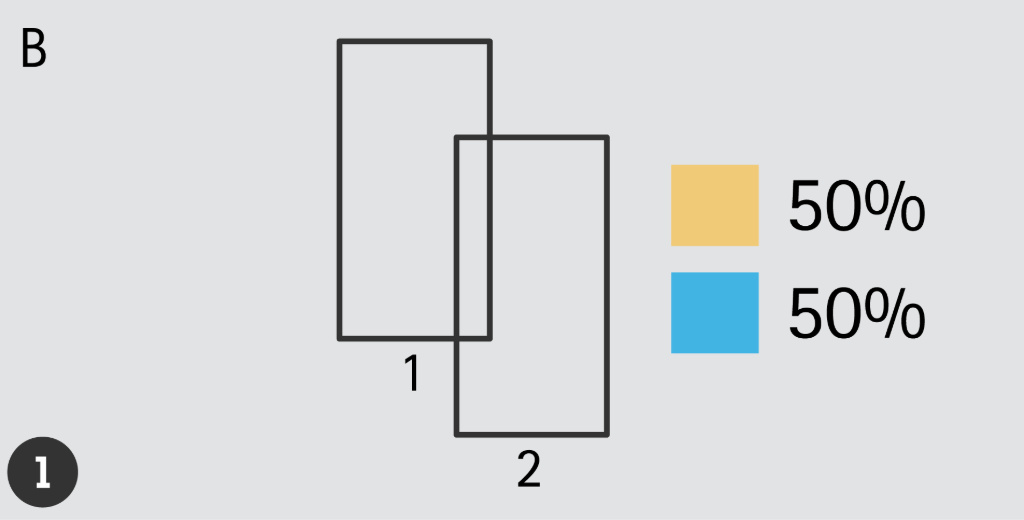

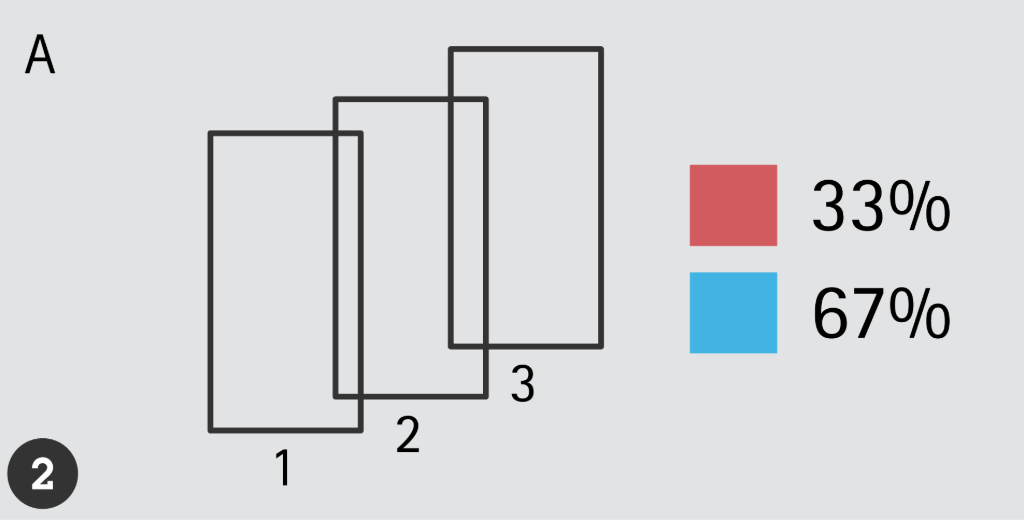

This figure demonstrates optimized metadata delivery, where the camera provides information in a unified format organized around each object tracked in the scene. The frame for each object includes all details known throughout the object's tracking lifetime.

In the first frame, details about object B are presented, including its first and last detection, a trajectory summary, and the attributes detected during tracking. Object B had a 50% probability of wearing yellow clothing and a 50% probability of wearing blue clothing, consistent with its classification as blue in Frame 1 and yellow in Frame 2 of the real-time example.

The second frame follows the same format for object A, showing a 33% probability of red clothing and a 67% probability of blue clothing, consistent with red in one of its three frames and blue in the other two.
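Aggregation can be sketched by folding the per-frame observations of objects A and B from the real-time example into one summary per object. This sketch assumes each color's "probability" is the share of frames in which that color was detected, an assumption that happens to reproduce the percentages above; a real camera may instead weight by classifier confidence. Trajectory summaries are omitted, and all names are hypothetical.

```python
from collections import Counter, defaultdict

# Per-frame observations from the real-time example: (frame, object_id, clothing color)
observations = [
    (1, "A", "red"), (1, "B", "blue"),
    (2, "A", "blue"), (2, "B", "yellow"),
    (3, "A", "blue"),
]


def aggregate(observations):
    """Combine per-frame observations into one summary record per object."""
    frames = defaultdict(list)
    colors = defaultdict(Counter)
    for frame, obj, color in observations:
        frames[obj].append(frame)
        colors[obj][color] += 1
    summary = {}
    for obj in sorted(frames):
        total = len(frames[obj])
        summary[obj] = {
            "first_seen": min(frames[obj]),
            "last_seen": max(frames[obj]),
            # Share of frames in which each color was detected, as a percentage
            "clothing": {c: round(100 * n / total) for c, n in sorted(colors[obj].items())},
        }
    return summary


print(aggregate(observations))
# {'A': {'first_seen': 1, 'last_seen': 3, 'clothing': {'blue': 67, 'red': 33}},
#  'B': {'first_seen': 1, 'last_seen': 2, 'clothing': {'blue': 50, 'yellow': 50}}}
```

Five per-frame observations collapse into two records, which is the data reduction that optimized delivery relies on.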

Understanding the advantages and disadvantages of each approach is essential for designing the system architecture.

In addition, metadata can be delivered through various communication protocols and file formats, depending on the specific needs and preferences of the consuming system.

Combining Metadata from Different Sources

Integrating metadata from multiple sources is a powerful strategy that maximizes the potential of metadata.

When applied to various data sources, such as visual, auditory, activity, and process data, metadata can provide valuable insights for the effective management of any site.

Data sources such as RFID tracking, GPS coordinates, alarm events, meter readings such as temperature or chemical levels, noise detection, and point-of-sale transactional data are examples of metadata sources that can be integrated. The key to this integration is aligning the data from all sources according to their date and time records.
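Timestamp alignment can be sketched as a nearest-neighbor match within a tolerance window, here pairing point-of-sale transactions with video events. The record formats, transaction labels, and the 30-second tolerance are hypothetical, and the sketch assumes all sources share a synchronized clock.

```python
from bisect import bisect_left
from datetime import datetime, timedelta

# Hypothetical video events, sorted by time
video_events = [
    (datetime(2024, 5, 1, 10, 0, 5), "person at checkout 1"),
    (datetime(2024, 5, 1, 10, 3, 40), "person at checkout 1"),
    (datetime(2024, 5, 1, 10, 9, 10), "person at checkout 2"),
]
# Hypothetical point-of-sale transactions
pos_transactions = [
    (datetime(2024, 5, 1, 10, 0, 12), "sale-1"),
    (datetime(2024, 5, 1, 10, 6, 0), "refund-2"),
]


def align(pos, video, tolerance=timedelta(seconds=30)):
    """Pair each transaction with the nearest video event within tolerance."""
    times = [t for t, _ in video]
    pairs = []
    for t, transaction in pos:
        i = bisect_left(times, t)
        # Candidates: the video events just before and just after time t
        candidates = [j for j in (i - 1, i) if 0 <= j < len(times)]
        best = min(candidates, key=lambda j: abs(times[j] - t), default=None)
        if best is not None and abs(times[best] - t) <= tolerance:
            pairs.append((transaction, video[best][1]))
        else:
            pairs.append((transaction, None))  # no video event close enough
    return pairs


print(align(pos_transactions, video_events))
# [('sale-1', 'person at checkout 1'), ('refund-2', None)]
```

A refund with no matching video event is exactly the kind of discrepancy that only becomes visible once the sources are aligned on time.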

Combining metadata from different sources results in a more complete and richer view than what can be obtained from each source in isolation. This enables more qualified insights and more efficient decision-making.

Conclusion

In conclusion, we have seen that metadata play a crucial role in optimizing Security and Operational Management.

Whether through real-time delivery for immediate responses, optimized delivery for a broader overview, or the combination of metadata from multiple sources for a more complete perspective, metadata are transforming the way we manage and interpret information.

As we continue exploring and innovating in this area, we can expect even more improvements in the efficiency, effectiveness, and capability of Video Monitoring Systems.