Understand what the World Wide Web is, how it differs from the Internet, and how Surface Web, Deep Web, and Dark Web fit into the architecture of the Web.

Check it out!

The World Wide Web, also known as the Web, “WWW“, or “W3“, is a global information system that has been fundamental to democratising access to knowledge.

In this article, we explore the architecture of the World Wide Web and its main components, analysing its structure in layers.

Read on!

[elementor-template id=”24446″]

What Is the World Wide Web?

Contrary to what many people think, the Web and the Internet are not synonyms.

The Web is one of the many services made available through the Internet, alongside email, videoconferencing applications, and online games.

Developed in 1989 by Tim Berners-Lee, the World Wide Web is a distributed information system based on hypermedia that organises and distributes electronic documents using the infrastructure of the Internet.

How Does the World Wide Web Work?

Through the concept of hypermedia, the Web allows users to navigate between different resources, forming a network of interconnected “files” that can be accessed from virtually anywhere in the world.

The Concept of Hypermedia

Hypermedia is a system for organising information that allows documents to be built and interconnected through embedded references.

Unlike a linear reading structure, such as a book or a conventional document, hypertext gives the user the ability to move between different documents and sections in a non-sequential way, creating an exploratory experience based on associations between content and concepts.

When a user clicks a link, the browser interprets that reference and directs the user to the corresponding document or resource.

This navigational structure is what forms the interconnected “web” of information that defines the World Wide Web, enabling dynamic and decentralised access to distributed data.

Client-Server Architecture

The Web operates on a client-server architecture model in which browsers (clients) send requests and Web servers process those requests, returning the corresponding documents or data.

Browsers

Browsers work as the interaction interface between users and Web resources.

When accessing a website, the browser goes through a series of operations to retrieve and display the content in a way that is understandable and interactive for the user.

This process involves interpreting a range of instructions and commands embedded in the content, which define how each element will be displayed, how items will be positioned on the screen, and which interactions are available to the user.

Web Servers

Web servers store and distribute the resources requested by browsers.

They process requests and provide the necessary data, which may include pages, images, videos, and dynamic data generated by Web applications.

Transfer Protocols

The Hypertext Transfer Protocol (HTTP) is the foundation of communication on the Web, structuring the exchange of data between clients and servers.

Each request and response follows a specific pattern that includes headers, request methods, and response codes indicating the status of the operation.

Resource Identification

Each document or resource on the Web is identified by a URL (Uniform Resource Locator), which specifies the address of a resource and allows the browser to locate it.

The structure of URLs includes the protocol, the domain, and the path to the resource, all of which are essential for content organisation and navigation.

Domain Name System (DNS)

The Domain Name System is responsible for translating domain names into IP addresses, which identify servers on the network.

When a user types a Web address, DNS resolves the domain name into its corresponding IP address, allowing the browser to locate and access the correct server.

Layered Structure

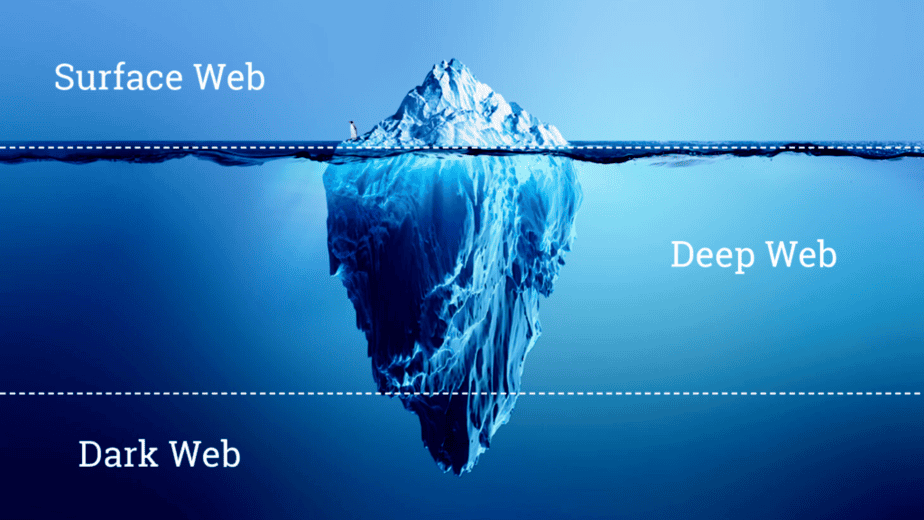

The Internet contains millions of Web pages, databases, and servers operating 24 hours a day. But the so-called “visible” Internet, also referred to as the surface or open Web, consisting of websites found by search engines such as Google and Yahoo, is only the tip of the iceberg.

Surface Web

The Surface Web, or Open Web, is the “visible” layer.

From a statistical point of view, this set of sites and data accounts for less than 5% of the entire Internet.

Surface Web sites can be found because search engines are able to index pages through visible links, a process known as “crawling”, named after the way search engines move through the Web like a spider.

Deep Web

The Deep Web refers to the part of the Web that is not indexed by conventional search engines.

To access sites on the Deep Web, it is necessary to know the specific address of the page, since those sites do not appear in search results.

A common example is the login page of a website control panel used for maintenance and the addition of new content. Although access to this page is restricted by login credentials, indexing it could potentially facilitate brute-force attacks, in which repeated login and password attempts are made in order to gain unauthorised access.

For that reason, it is preferable for such a login page to be of interest only to the site collaborators responsible for adding content and to remain outside the reach of search engine crawlers.

A website may also become part of the Deep Web if search engines, based on their own criteria, determine that the site contains inappropriate content and decide not to index it.

Dark Web

The Dark Web is a part of the Deep Web where you will find content that requires a mechanism different from the standard one used to access the Internet.

This includes content protected by layers of anonymity and security to prevent the identification of who is accessing it and where the information is stored.

The Dark Web uses its own anonymity and encryption system and requires specialised browsers for access, such as Tor.

The Evolution of the Web

The trajectory of the Web can be divided into distinct evolutionary phases that reflect technological advances, shifts in interaction paradigms, and the growing involvement of users in creating and consuming content.

Web 1.0

Web 1.0 represents the first stage of the Web’s evolution and is often referred to as the “static Web”.

It was characterised by the predominance of static pages and essentially informational content, created and maintained by a limited number of developers. At that stage, user interaction was minimal and largely restricted to navigating and reading information.

The communication architecture was one-way: from server to client.

Elements such as hyperlinks and simple HTML pages dominated, and concepts of interactivity were practically non-existent. This phase provided the structural basis for the browsing protocols and client-server model that would drive subsequent developments.

Web 2.0

Web 2.0 marks a significant change in the way users interact with the Web.

Emerging in the mid-2000s, Web 2.0 introduced a participatory and collaborative dynamic.

Interactive applications and social media platforms, such as blogs and wikis, emerged, allowing users not only to consume content but also to create and share it.

This evolution was supported by technologies such as AJAX, which enabled asynchronous updates and a more fluid and responsive user experience.

The architecture of the Web became bidirectional, with servers and clients exchanging information in real time.

This phase also saw the emergence of business models based on user data and targeted advertising, expanding the Web beyond a simple repository of information.

Web 3.0

Web 3.0, also known as the “Semantic Web”, represents an advance in the Web’s ability to understand and process information in a way that resembles human reasoning.

Its goal is to make data on the Web more accessible and interconnected through technologies such as artificial intelligence, machine learning, and natural language processing.

Web 3.0 aims to improve search accuracy and result relevance by interpreting the context and meaning behind queries.

Another fundamental aspect of this phase is decentralisation, often associated with the use of blockchain technologies to create an internet that is more secure and less dependent on large centralised entities.